Should you be using AI to manage your health in 2026?

ChatGPT, Claude, and other AI language models are increasingly used for health questions, from interpreting symptoms to researching supplements, and they can be genuinely powerful tools for this.

But there’s a critical difference between using AI to build your understanding and using it to replace your judgement. This guide shows you how to prompt AI tools effectively for health decisions without outsourcing your thinking, to get the best results and educate yourself in the process.

Language models like ChatGPT are now part of how people think about health.

Used well, they reduce cognitive load and help you ask better questions. Used poorly, they quietly replace your judgement with something that sounds confident but has no skin in the game.

The difference isn’t about tech literacy. It’s about using these tools in the way that best supports you, as a human being, to learn, grow and have the best health possible.

Here are the principles we use, with practical examples of how to apply them.

1. The Golden Rule: Treat AI as your research assistant, not your doctor

Here’s the simplest way to think about using AI for health:

Treat it like a researcher helping you prepare for a meeting with your doctor, not as the doctor themselves.

This changes everything about how you prompt.

Wrong framing (AI as doctor):

- “What should I take for my symptoms?”

- “Diagnose what’s wrong with me”

- “Tell me if I need to see someone”

Right framing (AI as research assistant):

- “Help me understand what commonly causes these symptoms”

- “What questions should I ask my doctor about this?”

- “What information would be useful to track before my appointment?”

The research assistant model keeps you in the right relationship with the tool: it’s helping you prepare, organise your thinking, and understand your options, but you’re still the one making decisions, and your practitioner is still the one with expertise, examination capability, and accountability.

This principle underlies everything else in this article.

2. The ChatGPT Prompts That Matter Most: Questions That Build Your Capability vs Erode It

Remember the research assistant model: you’re not asking AI to make decisions, you’re asking it to help you understand what matters so you can make better decisions yourself.

Stop asking for answers. Start asking for frameworks.

Prompts that erode agency:

- “What should I take for X?”

- “What’s the best protocol?”

- “Just tell me what to do”

Prompts that build capability:

- “What factors do people typically consider when addressing X?”

- “What trade-offs exist between approaches A and B?”

- “What would signal that escalation is needed?”

- “What are common mistakes people make when interpreting this?”

The difference isn’t length or sophistication. It’s who remains in the decision-making seat.

The first set outsources judgement. The second builds it.

Examples:

Instead of: “What supplements should I take for fatigue?”

Try: “What are the common causes of fatigue? What would help me figure out which might be relevant?”

Instead of: “Is magnesium better than melatonin for sleep?”

Try: “What are magnesium and melatonin each doing for sleep? What makes them work differently?”

Instead of: “Should I take probiotics?”

Try: “What are probiotics actually addressing? What situations would make them more or less relevant?”

This simple shift changes everything. You stop collecting instructions and start building understanding.

Your research assistant can help you map the territory. You’re the one who decides where to go.

Starting point: You don’t need perfect prompts

The examples in this article range from simple to sophisticated—start where you are.

A simple shift works:

Instead of: “What should I take for sleep?”

Try: “What factors affect sleep? What would help me work out what’s relevant for me?”

Instead of: “Give me a protocol for gut issues”

Try: “What commonly causes gut issues? How would I tell which might apply to me?”

As you learn what matters, your prompts will naturally get more specific. The principle stays the same: ask your research assistant for understanding, not instructions.

In this guide: We’ll cover how context improves AI responses, when to escalate to a practitioner, and advanced prompting techniques for complex health situations.

3. Treat language models as mirrors, not authorities

They’re good at summarising and organising information, and they can undeniably offer some insights. They’re not good at knowing what’s true in the way a human does because, as of the end of 2025, they have no context, carry no responsibility, and face no consequences.

If you catch yourself thinking “ChatGPT says…” rather than “Based on this, I think…”, that’s judgement being outsourced.

A good tool sharpens your thinking. A bad use of a tool replaces it.

4. How to Prompt AI with Context: Why Details Matter for Health Questions

Think about briefing a “junior” research assistant. The more relevant information you give them, the more useful their work becomes. Remember that you, not the AI, are the one managing your health.

Generic questions get generic answers. Contextualised questions get responses that actually relate to your situation.

What context typically matters:

- Current symptoms and their patterns: Not just “fatigue” but “fatigue that’s worse in the afternoon, improves after eating”

- Relevant health history: Previous diagnoses, tests you’ve done, treatments you’ve tried

- What you’ve already tried: What worked, what didn’t, how you responded

- Your constraints: Dietary restrictions, previous intolerances, budget limitations

- Your timeline: How long has this been happening? Are you trying to address something acute or optimise?

Simple example:

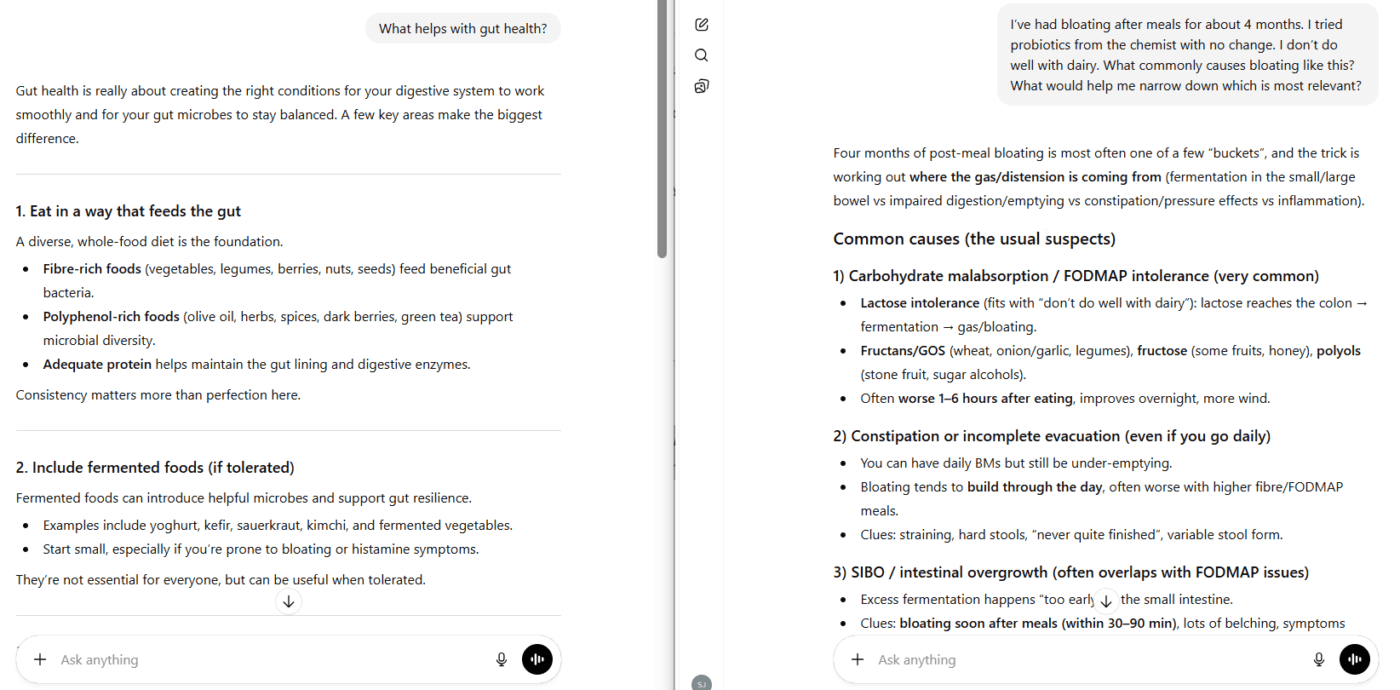

Generic prompt:

“What helps with gut health?”

You’ll get a generic list: probiotics, fibre, fermented foods. Your research assistant has no context to work with.

With basic context:

“I’ve had bloating after meals for about 4 months. I tried probiotics from the chemist with no change. I don’t do well with dairy. What commonly causes bloating like this? What would help me narrow down which is most relevant?”

Now your research assistant can consider:

- Your symptom (bloating after meals)

- Your timeline

- What didn’t work (generic probiotics)

- Your constraint (dairy intolerance)

The more context you provide, the more useful the research becomes.
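If you’re comfortable with a little code, the same idea can be sketched as a small template that puts your context first and your understanding-focused questions last. This is a minimal illustration only, assuming Python; the `HealthContext` class, its field names, and the `build_prompt` function are hypothetical names invented for this example, not part of any AI tool.

```python
from dataclasses import dataclass


@dataclass
class HealthContext:
    """Hypothetical container for the kinds of context listed above."""
    symptoms: str
    timeline: str = ""
    history: str = ""
    already_tried: str = ""
    constraints: str = ""


def build_prompt(ctx: HealthContext) -> str:
    """Join the non-empty context fields, then ask for understanding
    rather than instructions (the research assistant framing)."""
    parts = [ctx.symptoms, ctx.timeline, ctx.history,
             ctx.already_tried, ctx.constraints]
    context = " ".join(p.strip() for p in parts if p.strip())
    questions = (
        "What commonly causes a pattern like this? "
        "What would help me narrow down which is most relevant?"
    )
    return f"{context} {questions}"


# Example based on the bloating prompt above.
ctx = HealthContext(
    symptoms="I've had bloating after meals.",
    timeline="It has been going on for about 4 months.",
    already_tried="I tried probiotics from the chemist with no change.",
    constraints="I don't do well with dairy.",
)
print(build_prompt(ctx))
```

The point of the template is the order, not the code: what you know goes in first, and the closing questions ask for causes and ways to narrow them down rather than for a recommendation.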

5. Ask questions that build understanding, not answers to follow

Your research assistant should help you understand your options, not choose for you.

Simple examples with context:

For digestive symptoms:

“I get bloating about an hour after meals, especially bigger meals or fatty foods. It’s been about 4 months. What commonly causes this pattern? What would help me understand which is most likely for me?”

For sleep issues:

“I fall asleep fine but wake up around 3am and can’t get back to sleep. It’s been happening most nights for a few months. What usually causes this pattern? What would be worth tracking or observing?”

For energy problems:

“My energy crashes in the afternoon, especially if I skip lunch. I sleep okay but don’t wake up feeling refreshed. What patterns cause this? What would help me work out what’s going on?”

Notice these prompts:

- Include basic context (timing, pattern, duration)

- Ask for understanding rather than instructions

- Are simple enough that anyone can write them

- Give enough information to get a useful response

- Frame AI as research assistant, not decision-maker

Building on previous conversations:

If you’re having an ongoing conversation, you can reference what you discussed before:

“I tried the low-sugar breakfast approach we talked about. The afternoon energy crash is better but I’m still not waking up refreshed. What does that tell us? What would be worth looking at next?”

This lets your research assistant help you interpret your response to changes—which is often more valuable than the initial suggestion.

6. Use AI to slow you down, not speed you up

One of the hidden risks of AI is premature action.

When something feels urgent or confusing, speed can feel reassuring. But in health especially, fast answers are often worse than thoughtful ones.

Your research assistant is useful when they help you:

- Map the problem more clearly

- See what you don’t yet understand

- Identify what actually matters

They’re being misused when they:

- Push you toward buying things before the problem is clear

- Create urgency where patience would be wiser

- Make you feel productive without actually building understanding

Practical example:

You’ve been experiencing fatigue, brain fog, and digestive issues for months.

Poor approach:

“Give me a supplement protocol for fatigue, brain fog, and gut issues.”

This gets you a shopping list. You’ll feel like you’re taking action. But you’re no closer to understanding what’s actually happening.

Better approach (simple version):

“I’m 42 and dealing with fatigue (worse in mornings), brain fog (gets better after eating), and digestive issues (bloating, irregular bowel movements). This has been developing over about 18 months. I eat fairly clean and sleep okay, but don’t wake up refreshed. My GP checked my thyroid 6 months ago and said it was normal. What patterns commonly link these symptoms? What would help me work out which is most relevant?”

This slows you down in a useful way. You’re briefing your research assistant properly so they can help you build a map before choosing a route.

Follow-up prompts:

“Of those possibilities, the blood sugar one seems to fit my symptoms best. What would help me test if that’s what’s going on? What should I be tracking?”

“If I wanted to try managing blood sugar first, what does that typically involve? How long before I’d know if it’s helping? What would tell me I’m on the wrong track?”

These questions help you think systematically, with clear decision points. Your research assistant is helping you prepare; you’re still the one deciding.

7. Use AI to identify what you don’t know yet

A good research assistant shows you what questions you haven’t thought to ask – which is often more valuable than answering the questions you have.

But this only works when you provide enough context.

Simple prompts that reveal knowledge gaps:

Before investigating symptoms:

“I’ve been getting afternoon headaches 3-4 times a week for about 6 months. They’re dull pressure, not sharp pain. I’m 38, work at a computer, sleep is okay. What else would a practitioner typically want to know about headaches like this? What am I not thinking to track?”

Before choosing a test:

“I’m thinking about doing a gut test. I have chronic bloating and reflux that’s gotten worse over two years. Probiotics didn’t help. I don’t have severe pain but I’m uncomfortable most days. Would a gut test make sense for this? What would it tell me and what wouldn’t it tell me? What questions should I ask before deciding?”

Before starting something new:

“I’m thinking of trying magnesium for sleep and muscle tension. I tried a B-complex once and felt anxious and wired. What should I know before starting magnesium? What would tell me if it’s working or causing problems? What questions should I ask a practitioner if I go that route?”

These prompts help you recognise:

- What variables matter that you hadn’t considered

- What you’re currently blind to

- When you need more information before acting

- What your past responses tell you about how you might react

- What questions to bring to a practitioner

Your research assistant is preparing you for better conversations with actual expertise.

8. Build context deliberately over time

If you’re going to use AI regularly for health questions, build the context deliberately, like briefing a research assistant over multiple sessions.

You don’t need to share everything at once. Start with the basics, then add detail as you go.

Simple approach:

First conversation – establish basics:

“I’m 45, female, living in Melbourne. I have hypothyroidism (on 100mcg thyroxine for 10 years) and had IBS in my 20s that I mostly manage with diet. Right now I’m dealing with lower energy than I’d like, bloating after most meals despite being gluten and dairy free, and hair thinning over the past year. I sleep about 7 hours but don’t wake up refreshed.”

Next question – reference that context:

“Given what I shared about my thyroid and symptoms, could the hair thinning be thyroid-related? What would help me tell? What questions should I ask my doctor about testing beyond TSH?”

Build on it:

“I got my thyroid results back. TSH is 2.1 (my doctor says that’s fine), but I asked for the full panel. My fT4 is mid-range and fT3 is in the lower third. Does this fit with my symptoms of fatigue, hair thinning, and not waking refreshed? What would be useful to discuss with my doctor about this pattern?”

The advantage:

Your research assistant can start connecting patterns across your symptoms and responses, like a practitioner would over multiple appointments. But remember: they’re helping you prepare. The practitioner is still the one with expertise and accountability (or if you are doing it yourself, the responsibility lies with you!).

Important note: Most AI tools don’t remember between completely separate conversations unless you use their memory features (like ChatGPT’s memory or Claude’s memory). If you’re starting a new conversation, you may need to include the relevant context again. It can also help to save the conversation as a web browser bookmark so you can return to it later, or to make use of ChatGPT or Claude “projects”.
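For readers who prefer to keep their own record, one way to re-include context in a new conversation is to store your background notes in a local file and paste them in ahead of each new question. The sketch below assumes Python and a JSON file on your own computer; the function names and the file layout are hypothetical choices for illustration, and nothing here talks to ChatGPT, Claude, or any other service.

```python
import json
from pathlib import Path


def save_context(path: Path, entries: list[str]) -> None:
    """Write the running list of background notes to a JSON file."""
    path.write_text(json.dumps(entries, indent=2), encoding="utf-8")


def load_context(path: Path) -> list[str]:
    """Read saved notes back; a missing file means no notes yet."""
    if not path.exists():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


def with_context(path: Path, question: str) -> str:
    """Prepend saved notes to a question for a fresh conversation."""
    notes = load_context(path)
    if not notes:
        return question
    background = "\n".join(f"- {note}" for note in notes)
    return f"Background I've shared before:\n{background}\n\n{question}"
```

In use, you might call `save_context(Path("health_context.json"), [...])` once with your basics, then pass each new question through `with_context` and paste the result into the chat. Keeping the notes in your own file also means you decide what gets shared and when.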

9. Know when your research assistant has done their job

There are clear moments where more research isn’t the answer:

- Symptoms are getting worse despite reasonable self-directed changes

- You’re more confused now than when you started

- You keep asking similar questions hoping for different answers

- You’re using AI to avoid seeing a practitioner when you know you should

- The amount of explaining required to get useful answers suggests this is beyond self-investigation

This is when your research assistant has done their job—they’ve prepared you to escalate to actual expertise.

Seeing a practitioner isn’t giving up. It’s often the smart next step.

Simple decision framework:

Ask yourself:

- Has my research assistant helped me get clearer, or am I just collecting more information?

- Would testing or professional assessment actually answer this, or am I hoping research will?

- Am I avoiding something I know I should do?

- Is this getting more complex instead of simpler?

- Do I have enough clarity now to have a productive conversation with a practitioner?

If the answers point toward avoidance or confusion—or if you’ve gotten as clear as self-investigation can get you—that’s when expertise matters more than information.

Example:

You’ve been working on digestive issues for 3 months with diet changes. You keep asking your AI research assistant variations of:

“I tried low-FODMAP and it helped a bit but symptoms are still there. Should I try XYZ next?”

If you’re asking increasingly specific questions but not getting meaningfully closer to feeling better, your research is done. More information isn’t helping. You’re ready for expertise.

Your research assistant has prepared you well. Now it’s time to escalate.

Responsibility never transfers to the AI

No system, AI or human, carries the consequences of your decisions except you.

That doesn’t mean doing everything alone. It means staying the one who decides when to act, when to wait, and when to get help.

A research assistant makes your decisions clearer. They don’t make the decisions for you.

Even with perfect context, your AI research assistant:

- Isn’t accountable for outcomes

- Can’t examine you

- Can’t order tests

- Doesn’t carry any risk if things go wrong

- Doesn’t replace the expertise of a practitioner

Context makes them a better research assistant. It doesn’t make them a substitute for expertise when expertise is actually needed.

Advanced ChatGPT Prompts for Health: When Simple Isn’t Enough

Once you’re comfortable with basic contextual prompting, you can give your research assistant more detailed briefs.

Advanced prompts include more specific detail:

Advanced digestive symptom prompt:

“I’m experiencing bloating 30-60 minutes after meals, particularly after larger meals or those with more fat. This has been happening for about 4 months. I haven’t had my gallbladder removed and I don’t have diagnosed SIBO, though I haven’t been tested. My bowel movements are generally normal but occasionally loose. No blood, no severe pain, no unintentional weight loss. I’m 40, female, no other diagnosed conditions, not on medications except occasional ibuprofen. What are the main categories of factors that can contribute to post-meal bloating with this pattern? For each category, what would help narrow down whether it’s relevant in my case? What would a practitioner typically want to know beyond what I’ve shared? What patterns would suggest it’s time to test rather than continue investigating on my own?”

Advanced lab interpretation prompt:

“I have a GI-MAP result showing elevated Klebsiella pneumoniae at 10^8, along with low Akkermansia muciniphila (below detection) and Faecalibacterium prausnitzii at 10^6 (reference range 10^9-10^11). I’ve had ongoing fatigue for 18 months—worse in the afternoon—and joint stiffness in my hands and knees that’s worse in the morning and improves with movement. No diagnosed autoimmune conditions but my mother has rheumatoid arthritis. I’m 38, eat a whole foods diet, limited processed foods. What contexts make elevated Klebsiella at this level clinically significant versus incidental? How does the pattern of low beneficial bacteria combined with my symptoms and family history change the interpretation? What questions should I be asking a practitioner about these results? What other markers would help confirm or challenge the significance? What would a practitioner typically want to know about my history or symptoms that I haven’t mentioned?”

Advanced supplement decision prompt:

“I’m considering starting methylfolate and methylB12 for support with chronic fatigue and difficulty recovering from exercise. I have MTHFR C677T homozygous (confirmed via 23andMe, verified with clinical testing). I tried a B-complex about 3 years ago (contained 400mcg folic acid and 100mcg B12) and within 2 days felt overstimulated, anxious, and had difficulty sleeping—symptoms resolved when I stopped. I’m 45, female, no other diagnosed conditions, currently taking magnesium glycinate 300mg at night and vitamin D 2000IU daily. What’s the mechanistic difference between supporting methylation with methylfolate versus folinic acid? Given my MTHFR genotype, previous adverse response to B-vitamins, and current supplement regimen, what factors influence which approach might be better tolerated? What would be a conservative starting approach? What should I be tracking to know if it’s working or causing problems? What questions should I ask a practitioner before starting this?”

What makes these advanced:

- Precise symptom characterisation: Timing, triggers, quality, duration, associated symptoms

- Comprehensive relevant history: Previous tests, diagnoses, treatments, responses, family history

- Specific exclusions: What you’ve ruled out, what you haven’t tested

- Current interventions: Everything you’re currently doing

- Detailed constraints: Specific intolerances, previous adverse reactions, medications

- Practitioner-oriented questions: “What should I ask my doctor?” keeps the research assistant in their proper role

When to use advanced prompts:

- When simple prompts have gotten you oriented but you need more precision

- When interpreting complex test results before discussing with a practitioner

- When you have a detailed history that’s directly relevant

- When you’re trying to distinguish between similar possibilities

- When previous interventions have given you information worth incorporating

- When you want to prepare thoroughly for a practitioner appointment

When not to use them:

- When you’re just starting to investigate something

- When you don’t yet have enough information to provide meaningful detail

- When simpler prompts would get you to the same place

- When the complexity is making you more confused rather than more clear

- When you’re using complexity to avoid admitting you need actual expertise

The progression:

Start simple → Get oriented → Build context → Add detail as it becomes relevant → Use advanced prompts when precision matters → Escalate to expertise if needed when your research is done

Most questions don’t need advanced prompts. But when they do, the additional context and specificity can help your research assistant prepare you more thoroughly for conversations with practitioners.

Remember: Even advanced prompting doesn’t replace expertise. It prepares you to use expertise more effectively.

Frequently Asked Questions

ChatGPT and other AI tools aren’t doctors and don’t provide medical advice. They’re useful as research assistants, helping you understand patterns, organise your thinking, and prepare for conversations with practitioners. They should never replace professional medical assessment.

Ask for frameworks rather than prescriptions. Instead of “What should I take for fatigue?” ask “What commonly causes fatigue? What would help me determine which is relevant?” This builds understanding rather than creating dependency.

AI can help you understand different supplements, their mechanisms, and trade-offs but shouldn’t make the decision for you. Use it to research and prepare questions, then make decisions based on your full context, history, and ideally with practitioner guidance.

Yes. The research assistant approach works across all AI language models – ChatGPT, Claude, Gemini, and others. The principles of asking for frameworks, providing context, and maintaining your judgement apply regardless of the specific tool.

When symptoms worsen despite self-directed changes, when you’re more confused than when you started, when you’re asking repetitive questions without progress, or when the complexity suggests you need professional assessment rather than more research.

Our position

AI can be genuinely useful when it helps people think more clearly, understand their options, and navigate complexity with confidence.

It becomes harmful when it creates dependency, loss of your individual agency, or the illusion of certainty.

We design our work, and any future tools, around that line. This article was written collaboratively with AI, using exactly the principles we describe: treating it as a research assistant that builds understanding, not one that replaces judgement.

The research assistant model is the difference.

When AI functions as a research assistant, helping you prepare, increasing your knowledge and broadening your way of thinking, it builds you up. When it’s treated as the perfect expert giving you all the answers, it erodes judgement.

The quality of the model matters less than the role it plays in your decision-making. Whether you’re using ChatGPT, Claude, or another AI tool, the research assistant model keeps you in the right relationship with the technology.

If you’re working through a complex health situation and want support that keeps you in the driver’s seat while providing real expertise, you may also want to explore our Health Unlimited program.